3D-Computer-Vision

Modalities

| Lecture Type | Lecturer | Scope | Place | Dates | Start |
| --- | --- | --- | --- | --- | --- |
| Lecture | Prof. Dr. Christian Wöhler | 2 Unit(s) | P1-O4-207 | Thursday 10:00 - 12:00 | 16th of April 2025 |
| Exercise | M.Sc. Moritz Tenthoff | 1 Unit(s) | P1-O1-108 | Thursday 12:00 - 13:00 | 23rd of April 2025 |

Acquisition of credit points in the ECTS

5 credits upon successful participation in the exam.

General Overview

This lecture covers methods of 3D image processing, i.e. the image-based three-dimensional reconstruction of natural scenes and objects. It begins with an introduction to spatial geometry based on linear algebra, the theory of optical imaging, and basic methods for calibrating camera systems. This is followed by an overview of three-dimensional scene reconstruction with photogrammetric methods based on multiple images, in particular the classic method of bundle adjustment. In addition, the lecture introduces methods for determining the three-dimensional position and orientation of objects ("pose estimation") on the basis of geometry models. The three-dimensional reconstruction of object surfaces based on their physical properties (e.g. shape from shading) is a further topic. Practical application examples from current research illustrate each subject area.

Part I: Triangulation-based methods

The first part of the lecture covers the basics of optical imaging and the imaging errors of lenses, in particular errors in sharpness, color and distortion. Every image-based 3D scene reconstruction begins with the calibration of the camera. For this reason, the lecture introduces methods of camera calibration that use a calibration body of known geometry.
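To make the role of the camera parameters concrete, the following is a minimal sketch of a pinhole projection with a single radial distortion coefficient. It is written in Python with NumPy purely for illustration. The function name `project_points` and the parameters `f`, `cx`, `cy` and `k1` are assumptions chosen for this example and are not taken from the lecture material. Calibration is the task of estimating such parameters from images of a body with known geometry.

```python
import numpy as np

def project_points(points_cam, f, cx, cy, k1=0.0):
    """Project 3D points given in the camera frame, shape (N, 3), to pixels.

    f      : focal length in pixels (assumed equal in x and y)
    cx, cy : principal point in pixels
    k1     : first radial distortion coefficient (0 means no distortion)
    """
    points_cam = np.asarray(points_cam, dtype=float)
    # Perspective division onto the normalized image plane (Z = 1).
    x = points_cam[:, 0] / points_cam[:, 2]
    y = points_cam[:, 1] / points_cam[:, 2]
    # One-parameter radial distortion: scale by (1 + k1 * r^2).
    r2 = x**2 + y**2
    factor = 1.0 + k1 * r2
    # Map to pixel coordinates.
    u = f * factor * x + cx
    v = f * factor * y + cy
    return np.column_stack([u, v])
```

A point on the optical axis lands on the principal point regardless of `k1`, while off-axis points are shifted radially by the distortion term.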

One of the most important methods of three-dimensional scene reconstruction is stereo image analysis, which is based on the evaluation of image pairs of a scene. The positions of image points belonging to scene features are determined in both images; then the three-dimensional structure of the scene features is determined by triangulation. At this point, a brief introduction to projective geometry is given, which is a helpful mathematical framework for this task. Stereo image analysis, like more complex multiocular three-dimensional reconstruction methods, relies on establishing correspondences between points in the images of the scene. It must be known, for example, which points in image 1 correspond to which points in image 2, i.e. which pixels belong to the same physical object or part of an object in the scene.
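The triangulation step can be sketched with the linear (DLT) method, which uses homogeneous coordinates from projective geometry. This is one common textbook formulation and not necessarily the one used in the lecture. The camera matrices `P1` and `P2`, the intrinsic matrix `K`, the baseline of 0.1 and the test point are hypothetical values chosen for the example, and the correspondence between the two image points is assumed to be known.

```python
import numpy as np

def triangulate(P1, P2, x1, x2):
    """Linear (DLT) triangulation of one 3D point from two views.

    P1, P2 : 3x4 projection matrices of the two cameras
    x1, x2 : corresponding pixel coordinates (u, v) in image 1 and image 2
    """
    # Each view contributes two linear equations in the homogeneous point X.
    A = np.array([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector of the smallest singular value.
    _, _, Vt = np.linalg.svd(A)
    X = Vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3D point with a 3x4 camera matrix to pixel coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

# Hypothetical stereo rig: identical cameras, the second shifted along x.
K = np.array([[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.1], [0.0], [0.0]])])

X_true = np.array([0.2, -0.1, 2.0])
X_est = triangulate(P1, P2, project(P1, X_true), project(P2, X_true))
```

With noise-free correspondences, `X_est` reproduces `X_true` up to numerical precision. With real, noisy image points the linear solution is only an approximation and is usually refined afterwards.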

Bundle adjustment generalizes stereo image analysis to an essentially arbitrary number of cameras that view the scene from different positions. It is the standard method of photogrammetry and simultaneously determines the internal and external camera parameters as well as the three-dimensional structure of the scene from several images recorded from different locations.
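Bundle adjustment is commonly formulated as minimizing the sum of squared reprojection errors over all cameras and scene points. The sketch below only evaluates this cost for given parameters. It assumes each camera is summarized by a 3x4 projection matrix and omits lens distortion. The names `reprojection_residuals`, `reprojection_cost` and the observation format are hypothetical. In practice the cost would be handed to a nonlinear least-squares solver such as Levenberg-Marquardt.

```python
import numpy as np

def reprojection_residuals(cameras, points, observations):
    """Residuals between measured and predicted image points.

    cameras      : list of 3x4 projection matrices
    points       : array of 3D scene points, shape (N, 3)
    observations : list of (camera_index, point_index, u, v) measurements
    """
    residuals = []
    for cam_idx, pt_idx, u, v in observations:
        # Predict the image position of the point in this camera.
        x = cameras[cam_idx] @ np.append(points[pt_idx], 1.0)
        residuals.append([u - x[0] / x[2], v - x[1] / x[2]])
    return np.asarray(residuals).ravel()

def reprojection_cost(cameras, points, observations):
    """Sum of squared reprojection errors, the quantity to be minimized."""
    r = reprojection_residuals(cameras, points, observations)
    return float(r @ r)
```

Because every observation couples exactly one camera with one scene point, the resulting least-squares problem is sparse, which is what makes joint optimization over many images feasible.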

This is followed by an overview of methods for determining the three-dimensional position and orientation of objects from one or more images, which is also referred to as pose estimation. These methods assume that a geometry model of the object in question is available.

Part II: Intensity-based methods

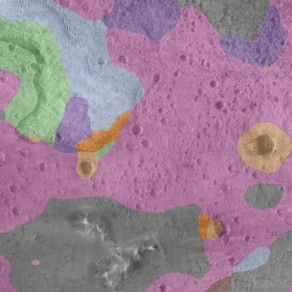

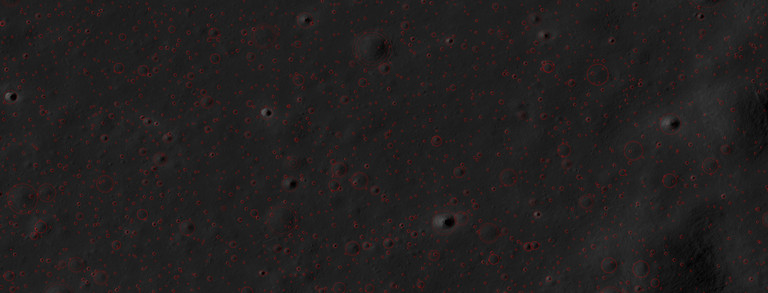

Intensity-based methods aim to derive the three-dimensional structure of an object from the intensity distribution in the image. First, the most important radiometric quantities are introduced, and the image formation process is modeled from a physical point of view. This requires knowledge of how incident light is scattered or reflected at the object surface. With such a reflectance model, surface gradients and, from them, height profiles along image lines can be determined in a simple way (photoclinometry). Using the photometric stereo method, a complete depth image can be derived from several images of a scene taken under different lighting conditions.
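As an illustration of photometric stereo, the following minimal sketch recovers surface normals and albedo per pixel by least squares. It assumes a Lambertian (ideally diffuse) surface, known distant light sources and no shadows. These are simplifying assumptions made for the example, not statements about the reflectance models treated in the lecture. The function name `photometric_stereo` and the array layouts are hypothetical.

```python
import numpy as np

def photometric_stereo(intensities, light_dirs):
    """Estimate normals and albedo from images under different lighting.

    intensities : array of shape (K, H, W), one image per light source
    light_dirs  : array of shape (K, 3), unit vectors towards the lights
    Returns (normals of shape (H, W, 3), albedo of shape (H, W)).
    """
    K, H, W = intensities.shape
    I = intensities.reshape(K, -1)
    # Lambertian model: I = light_dirs @ g with g = albedo * normal.
    # Solve for g at all pixels at once in the least-squares sense.
    g, *_ = np.linalg.lstsq(light_dirs, I, rcond=None)  # shape (3, H*W)
    albedo = np.linalg.norm(g, axis=0)
    # Guard against division by zero for completely dark pixels.
    normals = g / np.maximum(albedo, 1e-12)
    return normals.T.reshape(H, W, 3), albedo.reshape(H, W)
```

At least three images with linearly independent light directions are needed. The surface gradients follow from the normals as p = -n_x / n_z and q = -n_y / n_z, and integrating them yields the depth image.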

If only a single image is available, the reconstruction problem has an infinite number of solutions. By requiring that the reconstructed surface or its gradients satisfy additional constraints (e.g. smoothness of the surface, integrability of the surface gradients), a complete depth image of the surface can nevertheless be obtained from a single image under certain conditions.

Exercises

The lecture is accompanied by exercises in which participants implement selected methods from the lecture in MATLAB, based on practical application examples.

To access the data, you need to be registered for the Moodle room. You are registered for the Moodle room by registering for the course in the LSF.